To forecast win totals, we simulate the season a few thousand times, accounting for opponent, game location and byes. Better teams should win more games, of course. But expected wins vary a lot more than team quality alone would suggest.
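To make the simulation idea concrete, here is a minimal sketch of a season simulator. It is not our actual model: the logistic curve, the `home_edge` and `scale` values, and the function names are all illustrative assumptions, and byes are ignored for simplicity.

```python
import math
import random


def win_probability(team_rating, opp_rating, location, home_edge=3.0, scale=7.0):
    """Hypothetical map from a rating margin to a win probability.

    home_edge and scale are illustrative guesses, not values from the article.
    """
    margin = team_rating - opp_rating
    if location == "home":
        margin += home_edge
    elif location == "away":
        margin -= home_edge
    # Logistic curve: a margin of 0 gives exactly 0.5.
    return 1.0 / (1.0 + math.exp(-margin / scale))


def simulate_season(team_rating, schedule, n_sims=5000, seed=0):
    """Play the schedule n_sims times and return the win total of each run.

    schedule is a list of (opponent_rating, location) pairs, where location
    is "home", "away" or "neutral".
    """
    rng = random.Random(seed)
    totals = []
    for _ in range(n_sims):
        wins = sum(
            rng.random() < win_probability(team_rating, opp, loc)
            for opp, loc in schedule
        )
        totals.append(wins)
    return totals


# Made-up 12-game schedule for a +15.0 team, for illustration only.
example_schedule = [(10.0, "home"), (20.0, "away"), (15.0, "neutral")] * 4
example_totals = simulate_season(15.0, example_schedule)
expected_wins = sum(example_totals) / len(example_totals)
```

Averaging the simulated totals gives an expected win count; the spread of the totals shows how uncertain that forecast is.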

We can ask how many games a team *should* win, given how good they are, by looking at history. For example, Power-5 teams with a preseason MP rating of +15.0 have historically won ~8 regular-season games. And in fact our simulation expects Georgia to win 8 games this year, given their schedule. But Miami and Florida, with almost identical ratings, are expected to win 8.5 and 7.6, respectively. So even though we’d make Miami-Florida a toss-up on a neutral field, we expect Miami to win ~1 more game this year.

We take these deviations – what we expect given a team’s schedule this year versus what teams like them have done historically – as our strength-of-schedule (SOS) measure. And it is expressed in intuitive terms – wins.
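The arithmetic behind the measure is a simple subtraction. The sketch below uses the Georgia, Miami and Florida figures quoted above; the function and variable names are made up for illustration, and applying the ~8-win baseline to all three teams is an approximation, since their ratings are only almost identical.

```python
def strength_of_schedule(expected_wins, historical_wins):
    """SOS in wins: simulated wins for this schedule minus the historical norm."""
    return expected_wins - historical_wins


# ~8 wins is the historical norm for a +15.0 Power-5 team (from the text).
historical_baseline = 8.0

# Simulated expected wins quoted in the text.
simulated = {"Georgia": 8.0, "Miami": 8.5, "Florida": 7.6}

sos = {
    team: round(strength_of_schedule(wins, historical_baseline), 2)
    for team, wins in simulated.items()
}
# sos == {"Georgia": 0.0, "Miami": 0.5, "Florida": -0.4}
```

A positive value means the schedule is easier than average (extra expected wins); a negative value means it is tougher.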

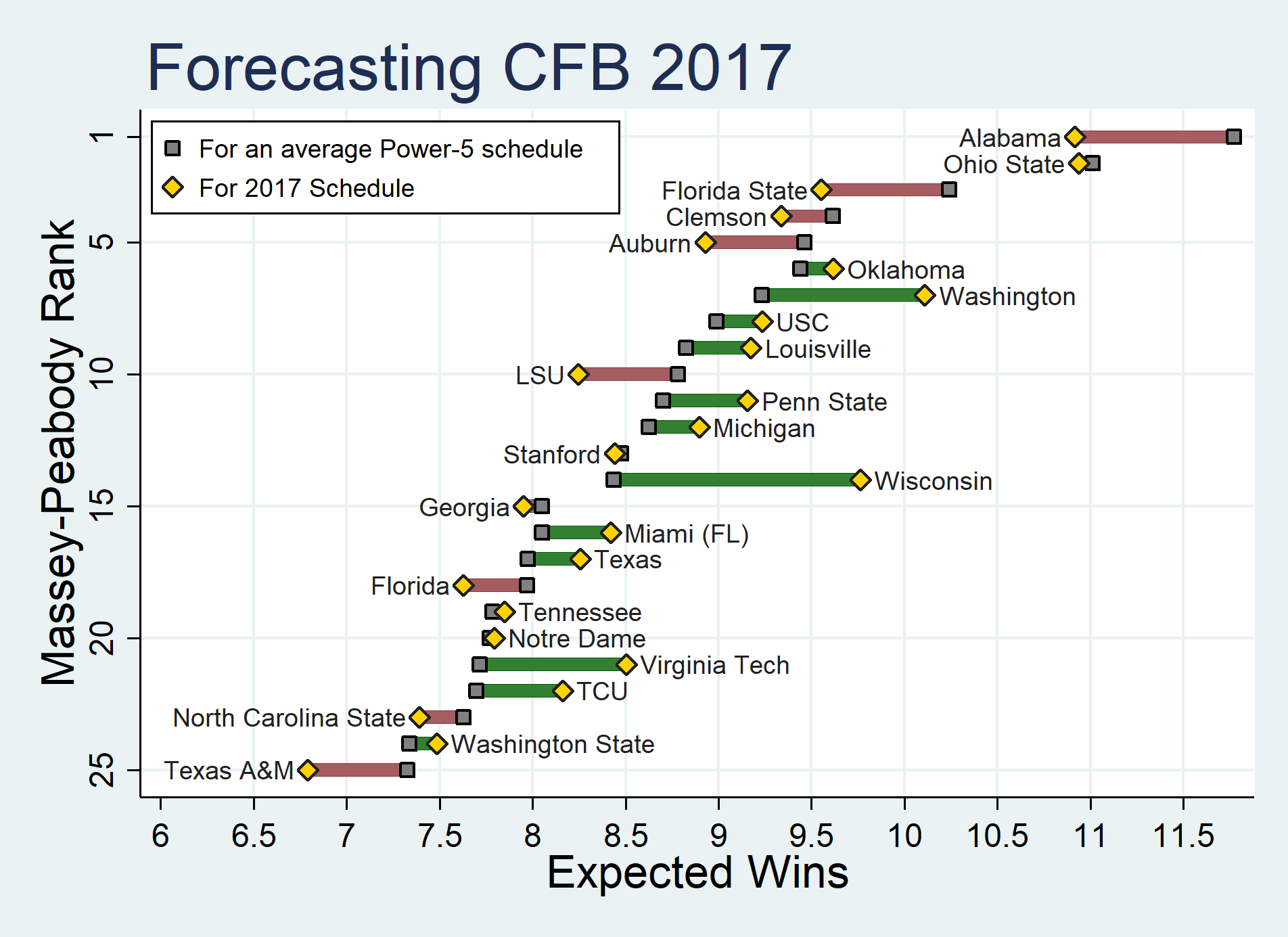

In the figure we plot expected wins and SOS for our preseason Top 25. Teams are sorted 1-25 top to bottom, with gold stars showing how many wins we expect from them this year. With the gray boxes we also show how many games Power-5 teams with that power rating have historically won. The deviation between a team’s gold star and gray box indicates their strength of schedule, with red bars indicating a more difficult than average SOS and green bars an easier than average SOS.

What jumps out to you? Alabama’s schedule versus Ohio State’s. The cakewalk Wisconsin seems to have in the Big Ten West. The difference between Washington’s and Stanford’s schedules despite being in the same division. The tax on Ws you pay when you’re in the SEC West.

Easiest schedules in P5 conferences (impact on expected wins): Wisconsin (+1.3), Northwestern (+1.1), Washington (+0.9), Minnesota (+0.85), Kansas State (+0.8) & Virginia Tech (+0.8).

Toughest: California (-1.3), Maryland (-0.9), Alabama (-0.85), South Carolina (-0.8), Oregon State (-0.7), Florida State (-0.7).

One of the big takeaways is how much difference SOS makes, especially among relatively similar teams. Among Top 25 teams, expected wins range from about 11 to 6.8. What determines where a team falls in that roughly 4-win range? Of course team quality, as proxied by the MP ratings, explains part of it. But only about two-thirds. About one-third of that variation comes from SOS. One-third! That’s a big share, and it is easily neglected. SOS is basically the team’s “situation”, and we know from decades of psychology that we neglect situation when evaluating performance. Hopefully this picture will keep it more salient.
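For readers who want to try a split like this on their own numbers, here is one possible way to do it. It is a sketch under assumptions, not necessarily the calculation behind the figures above: it treats expected wins as the historical baseline plus SOS and credits each part with its covariance with the total, so the two shares always sum to 1. The function names are hypothetical.

```python
from statistics import mean


def covariance(xs, ys):
    """Population covariance of two equal-length lists."""
    mx, my = mean(xs), mean(ys)
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)


def variance_shares(baseline_wins, sos):
    """Split the variance of expected wins into (quality share, SOS share).

    Assumes expected wins = baseline wins + SOS for every team, and that
    expected wins are not identical across teams (non-zero variance).
    """
    expected = [b + s for b, s in zip(baseline_wins, sos)]
    total = covariance(expected, expected)  # variance of expected wins
    quality_share = covariance(baseline_wins, expected) / total
    sos_share = covariance(sos, expected) / total
    return quality_share, sos_share


# Invented numbers for four hypothetical teams, for illustration only.
quality_share, sos_share = variance_shares(
    baseline_wins=[10.0, 9.0, 8.0, 7.0],
    sos=[0.5, -0.5, 0.5, -0.5],
)
```

The two returned values sum to 1, so they can be read directly as the fraction of the spread in expected wins attributed to team quality and to schedule.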